2026 AI Hardware Trends: The Memory Wall & Component Selection

4/20/2026 3:10:23 PM

With the explosion of AI servers and edge computing in 2026, traditional component selection is failing. Are your designs ready for the heat?

The year 2026 marks a definitive paradigm shift in the artificial intelligence hardware landscape. As large language models (LLMs) evolve from the training phase into massive, real-world deployment, the industry has moved beyond the singular pursuit of raw GPU compute power. We have officially entered the era of "system-level effective compute," where the primary bottleneck is no longer how fast a chip can calculate, but how efficiently it can access and move data. This fundamental constraint, known as the "Memory Wall," has redefined the rules of hardware competition, making memory bandwidth, capacity, and system-level integration the most critical factors in AI infrastructure.

The Memory Wall: The Defining Challenge of 2026

The "Memory Wall" refers to the growing disparity between the exponential increase in processor speeds and the comparatively sluggish improvement in memory access times. In the context of 2026's trillion-parameter generative AI and agentic workflows, this bottleneck is crippling. Modern AI inference, particularly the autoregressive "decode" phase in which models generate text token by token, is intensely memory-bandwidth-limited. Even the most powerful GPUs spend a significant amount of time idling, waiting for data to be fed from memory. Consequently, hardware architects and data center operators are forced to prioritize memory hierarchy over pure FLOPS (floating-point operations per second).
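
To make the bandwidth limit concrete, here is a rough back-of-the-envelope sketch. It assumes that each generated token requires streaming every model weight from memory once, and it ignores KV-cache traffic and batching. The model size, weight precision, and bandwidth figure are hypothetical values chosen for illustration, not measurements.

```python
def decode_tokens_per_second(param_count, bytes_per_param, mem_bandwidth_bytes_per_s):
    """Upper bound on single-stream decode speed when every token
    requires reading all model weights from memory once."""
    bytes_per_token = param_count * bytes_per_param
    return mem_bandwidth_bytes_per_s / bytes_per_token


def decode_arithmetic_intensity(bytes_per_param, flops_per_param=2.0):
    """FLOPs performed per byte of weights read. Uses the common
    approximation of about 2 FLOPs per parameter per token."""
    return flops_per_param / bytes_per_param


# Hypothetical setup: 70e9 parameters, 8-bit weights, 3 TB/s of memory bandwidth.
bound = decode_tokens_per_second(70e9, 1, 3e12)
intensity = decode_arithmetic_intensity(1)

print(f"Bandwidth-bound decode ceiling: {bound:.1f} tokens/s")
print(f"Arithmetic intensity: {intensity:.1f} FLOP/byte")
```

At roughly 2 FLOPs per byte, the compute units have very little work to do per byte fetched, which is why adding FLOPS alone does not raise this ceiling.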

HBM4 and the Battle for Bandwidth

To breach the Memory Wall, High Bandwidth Memory (HBM) has transitioned from a niche luxury to a strategic necessity. In 2026, the industry standard has shifted to HBM4. Using 3D stacking, HBM4 stacks up to 16 DRAM dies on a base logic die, connected by Through-Silicon Vias (TSVs), and the stack sits beside the GPU in the same package. This integration drastically shortens the data path, delivering bandwidth exceeding 3 TB/s per stack.
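
Per-stack bandwidth is simply interface width times per-pin data rate. The sketch below assumes the 2048-bit interface and 8 Gb/s per-pin rate of the JEDEC HBM4 baseline; neither figure comes from this article, so treat them as assumptions. It then works out the pin rate a stack would need to reach 3 TB/s.

```python
def stack_bandwidth_bytes_per_s(interface_bits, pin_rate_bits_per_s):
    """Peak bandwidth of one stack: every pin transfers pin_rate bits per second."""
    return interface_bits * pin_rate_bits_per_s / 8


def required_pin_rate_bits_per_s(interface_bits, target_bytes_per_s):
    """Per-pin rate needed to hit a target per-stack bandwidth."""
    return target_bytes_per_s * 8 / interface_bits


INTERFACE_BITS = 2048   # assumption: HBM4 per-stack interface width
BASE_PIN_RATE = 8e9     # assumption: baseline per-pin rate in bits/s

baseline = stack_bandwidth_bytes_per_s(INTERFACE_BITS, BASE_PIN_RATE)
needed = required_pin_rate_bits_per_s(INTERFACE_BITS, 3e12)

print(f"Baseline per-stack bandwidth: {baseline / 1e12:.3f} TB/s")
print(f"Pin rate needed for 3 TB/s:   {needed / 1e9:.2f} Gb/s")
```

Under these assumptions, a stack exceeding 3 TB/s has to run its pins well above the baseline rate, which is one reason the fastest parts are the hardest to source.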

However, the demand for HBM has triggered a severe supply-side crisis. Major manufacturers like SK Hynix, Samsung, and Micron have allocated over 70% of their advanced DRAM capacity to HBM production. This has created a "capacity squeeze," leading to skyrocketing prices and extended lead times. For component selectors in 2026, securing a steady supply of HBM4-equipped accelerators is the top priority, often dictating the entire system's availability.

The Rise of Agentic AI and CPU Bottlenecks

A significant trend in 2026 is the rise of "Agentic AI": autonomous agents capable of planning, reasoning, and executing complex, multi-step tasks. Unlike simple chatbots, these agents require massive system-level resource orchestration. This shift exposes a new bottleneck: the Central Processing Unit (CPU).

Traditional AI narratives focused heavily on GPUs, but Agentic AI relies on the CPU to manage the workflow, handle non-parallelizable logic, and coordinate between different models and tools. As a result, the CPU's role has been elevated from a supporting actor to a co-lead. Hardware selection in 2026 now demands high-core-count, high-frequency CPUs with robust PCIe lane configurations to prevent starving the GPUs. We are seeing a resurgence in the strategic importance of server-grade CPUs, with manufacturers optimizing specifically for AI orchestration workloads.

SSDs: From Passive Storage to Active Inference Partners

The role of the Solid State Drive (SSD) is undergoing a radical transformation. With AI agents maintaining "long context" windows (often exceeding 1 million tokens) to remember vast amounts of prior interactions, the memory required to store Key-Value (KV) caches is exploding. Storing all this active context in expensive HBM or even standard DRAM is economically unfeasible.
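
A quick sizing sketch shows why the KV cache grows so fast. For a standard transformer, each token stores one key and one value vector per layer per KV head. The model shape below (80 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical example, not a specific product.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bytes_per_value=2, batch_size=1):
    """Size of the KV cache in bytes.

    The leading factor of 2 accounts for storing both a key and a
    value tensor in every layer.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_value * batch_size)


# Hypothetical model shape with a 1-million-token context.
per_token = kv_cache_bytes(80, 8, 128, seq_len=1)
full_context = kv_cache_bytes(80, 8, 128, seq_len=1_000_000)

print(f"KV cache per token:      {per_token / 1024:.0f} KiB")
print(f"KV cache at 1M tokens:   {full_context / 1e9:.2f} GB")
```

With these assumed dimensions, a single 1-million-token session needs hundreds of gigabytes of cache, more than the HBM attached to one accelerator.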

Enter the AI-optimized SSD. In 2026, high-performance NVMe SSDs are being used as an extension of the memory hierarchy. Technologies like NVIDIA's Inference Context Memory System (ICMS) allow systems to offload inactive KV caches from GPU memory to ultra-fast SSDs. This turns the SSD into an active participant in the inference process, acting as a massive, low-latency reservoir for context. Component selection now favors enterprise SSDs with specific features like low queue-depth latency optimization and high endurance, rather than just raw sequential read/write speeds.
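
The offloading idea can be sketched as a two-tier cache with least-recently-used eviction. This is an illustrative toy, not NVIDIA's ICMS, whose interface is not described here. The class name `TieredKVCache` and its methods are hypothetical, and plain Python dictionaries stand in for GPU memory and the SSD.

```python
from collections import OrderedDict


class TieredKVCache:
    """Keeps the most recently used sessions in a small fast tier and
    spills the rest to a large slow tier."""

    def __init__(self, fast_capacity):
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # stands in for GPU memory (HBM)
        self.slow = {}             # stands in for an NVMe SSD

    def put(self, session_id, kv_blob):
        """Store or update a session's KV cache in the fast tier."""
        self.slow.pop(session_id, None)
        self.fast[session_id] = kv_blob
        self.fast.move_to_end(session_id)
        self._evict()

    def get(self, session_id):
        """Return a session's KV cache, promoting it from the slow tier if needed."""
        if session_id in self.fast:
            self.fast.move_to_end(session_id)
            return self.fast[session_id]
        kv_blob = self.slow.pop(session_id)  # raises KeyError if unknown
        self.fast[session_id] = kv_blob
        self._evict()
        return kv_blob

    def _evict(self):
        """Move least recently used sessions to the slow tier."""
        while len(self.fast) > self.fast_capacity:
            victim, blob = self.fast.popitem(last=False)
            self.slow[victim] = blob


cache = TieredKVCache(fast_capacity=2)
for name in ("a", "b", "c"):
    cache.put(name, f"kv-{name}")
print(sorted(cache.fast), sorted(cache.slow))
```

Promoting a session from the slow tier is a small, latency-sensitive read, which is consistent with the emphasis on low queue-depth latency rather than sequential throughput.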

System-Level Integration: Interconnects and Cooling

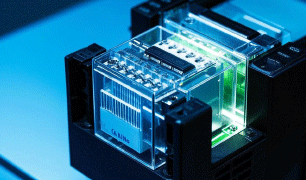

Finally, breaking the Memory Wall requires looking beyond individual components to the system architecture. As clusters scale to tens of thousands of GPUs, the interconnect becomes the nervous system of the AI brain. Copper traces are reaching their physical limits, leading to the accelerated adoption of Co-Packaged Optics (CPO) and 1.6T optical modules. By moving optical engines closer to the switch ASICs or GPUs, CPO significantly reduces power consumption and latency, ensuring that data flows freely between nodes.

1. The HBM & LPDDR Supply Crunch

To feed the insatiable appetite of next-gen AI platforms like NVIDIA's Vera Rubin, the demand for High Bandwidth Memory (HBM) and high-capacity LPDDR has skyrocketed. In fact, a single AI server rack can now consume over 50 TB of LPDDR5X memory, equivalent to the total memory of roughly 4,500 high-end smartphones. This extreme demand has forced major manufacturers like SK Hynix, Samsung, and Micron to divert over 80% of their advanced production capacity to AI-specific chips, creating a severe global shortage and driving prices up dramatically for consumer electronics.
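
As a sanity check on the smartphone comparison, the implied memory per phone can be computed directly from the two figures quoted above. Decimal units (1 TB = 10^12 bytes) are an assumption.

```python
RACK_LPDDR_BYTES = 50e12   # 50 TB per rack, as quoted above
PHONE_COUNT = 4_500        # smartphone equivalent, as quoted above

# Memory per phone implied by the comparison, in gigabytes.
per_phone_gb = RACK_LPDDR_BYTES / PHONE_COUNT / 1e9
print(f"Implied memory per phone: {per_phone_gb:.1f} GB")
```

The result is about 11 GB per phone.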

2. Architectural Innovations: Breaking the Limits

Engineers are fighting back against these physical limits with groundbreaking architectural shifts:

3. The Algorithm Counter-Attack

Interestingly, hardware isn't the only frontier. Google recently shook the market with its "TurboQuant" algorithm, which uses advanced math to compress AI memory (KV cache) requirements by 6x without losing accuracy. While this briefly panicked memory stock prices, the consensus is clear: such optimizations don't reduce the need for memory; they simply allow us to run vastly larger models and longer contexts on the hardware we have.
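
The "longer contexts on the same hardware" argument is simple arithmetic. The sketch below does not implement TurboQuant; it only applies the quoted 6x ratio to a fixed memory budget. The 100 GB budget and the 327,680 bytes of KV cache per token are hypothetical values.

```python
def tokens_that_fit(budget_bytes, kv_bytes_per_token, compression_ratio=1):
    """Number of whole tokens whose KV cache fits in the budget,
    given a compression ratio applied to the cache."""
    return (budget_bytes * compression_ratio) // kv_bytes_per_token


BUDGET_BYTES = 100 * 10**9     # hypothetical memory set aside for KV cache
KV_BYTES_PER_TOKEN = 327_680   # hypothetical uncompressed cost per token

uncompressed = tokens_that_fit(BUDGET_BYTES, KV_BYTES_PER_TOKEN)
compressed = tokens_that_fit(BUDGET_BYTES, KV_BYTES_PER_TOKEN, compression_ratio=6)

print(f"Context without compression: {uncompressed:,} tokens")
print(f"Context with 6x compression: {compressed:,} tokens")
```

The memory budget is unchanged in both cases; only the amount of context it can hold grows.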

What's Next?

As we look toward 2027 and 2028, keep an eye on emerging tech like High Bandwidth Flash (HBF) and Intel's Z-Angle Memory (ZAM). In 2026, if you want to understand the future of AI, stop looking at the processor and start looking at the memory.

AI power modules now demand stricter standards. We are seeing a new baseline that requires a 30% increase in ripple current ratings for tantalum capacitors and power inductors so they can handle massive current spikes without saturation or overheating.
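
As a small illustration of what a 30% uplift means when screening parts, the sketch below checks a candidate component against the raised baseline. The function names and the 2.0 A legacy rating are hypothetical; real selection should follow the manufacturer's datasheet.

```python
def required_ripple_rating(legacy_rating_a, uplift=0.30):
    """Ripple current rating (amperes) required after applying the uplift."""
    return legacy_rating_a * (1.0 + uplift)


def meets_new_baseline(part_rating_a, legacy_rating_a, uplift=0.30):
    """True if the part's rated ripple current satisfies the raised baseline."""
    return part_rating_a >= required_ripple_rating(legacy_rating_a, uplift)


# Hypothetical design that previously called for a 2.0 A ripple rating.
new_requirement = required_ripple_rating(2.0)
print(f"New required ripple rating: {new_requirement:.2f} A")
print("3.0 A part passes:", meets_new_baseline(3.0, 2.0))
print("2.5 A part passes:", meets_new_baseline(2.5, 2.0))
```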

Specifically, the "sky-high price" of HBM is driven by a combination of factors across the following dimensions:

1. Extreme Technical Complexity and High Manufacturing Costs

HBM is a rare product in the memory industry that is both highly "profitable" and "expensive." Its manufacturing faces the highest level of difficulty across nearly every link in the value chain:

2. Severe Supply-Demand Imbalance and Capacity "Crowding-Out Effect"

3. Astonishing Premium Pricing Power and Profit Margins

HBM's pricing logic has completely detached from traditional memory. Although its physical manufacturing cost is only about 3 to 4 times that of standard DDR memory, its selling price is 6 to 8 times higher due to its irreplaceable performance and extreme scarcity.

In 2026, "derating" isn't just a suggestion; it's a survival strategy, alongside intelligent management and proactive maintenance. Adopt derating designs to ensure stability under extreme power density. Don't let basic components bottleneck AI innovation.
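
Derating means operating a component at only a fraction of its rated stress. A minimal check might look like the sketch below. The 0.5 derating factor and the voltages are hypothetical example values, not recommendations from this article.

```python
def derated_limit(rated_value, derating_factor):
    """Maximum stress allowed after derating, in the same unit as rated_value."""
    return rated_value * derating_factor


def within_derating(applied_value, rated_value, derating_factor):
    """True if the applied stress stays at or below the derated limit."""
    return applied_value <= derated_limit(rated_value, derating_factor)


# Hypothetical example: a capacitor rated for 25 V, derated to 50% of rating.
limit_v = derated_limit(25.0, 0.5)
print(f"Derated voltage limit: {limit_v:.1f} V")
print("12 V rail is acceptable:", within_derating(12.0, 25.0, 0.5))
print("16 V rail is acceptable:", within_derating(16.0, 25.0, 0.5))
```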

In summary, the AI hardware trends of 2026 are defined by the urgent need to dismantle the Memory Wall. The market has shifted from a GPU-centric view to a holistic system perspective. Success in this environment depends on a balanced configuration: leveraging HBM4 for raw bandwidth, utilizing powerful CPUs for agentic orchestration, employing SSDs for massive context management, and underpinning it all with optical interconnects and liquid cooling. For engineers and decision-makers, the key to component selection this year lies not in chasing the highest benchmark score, but in building a cohesive, memory-efficient ecosystem capable of sustaining the heavy demands of next-generation AI.